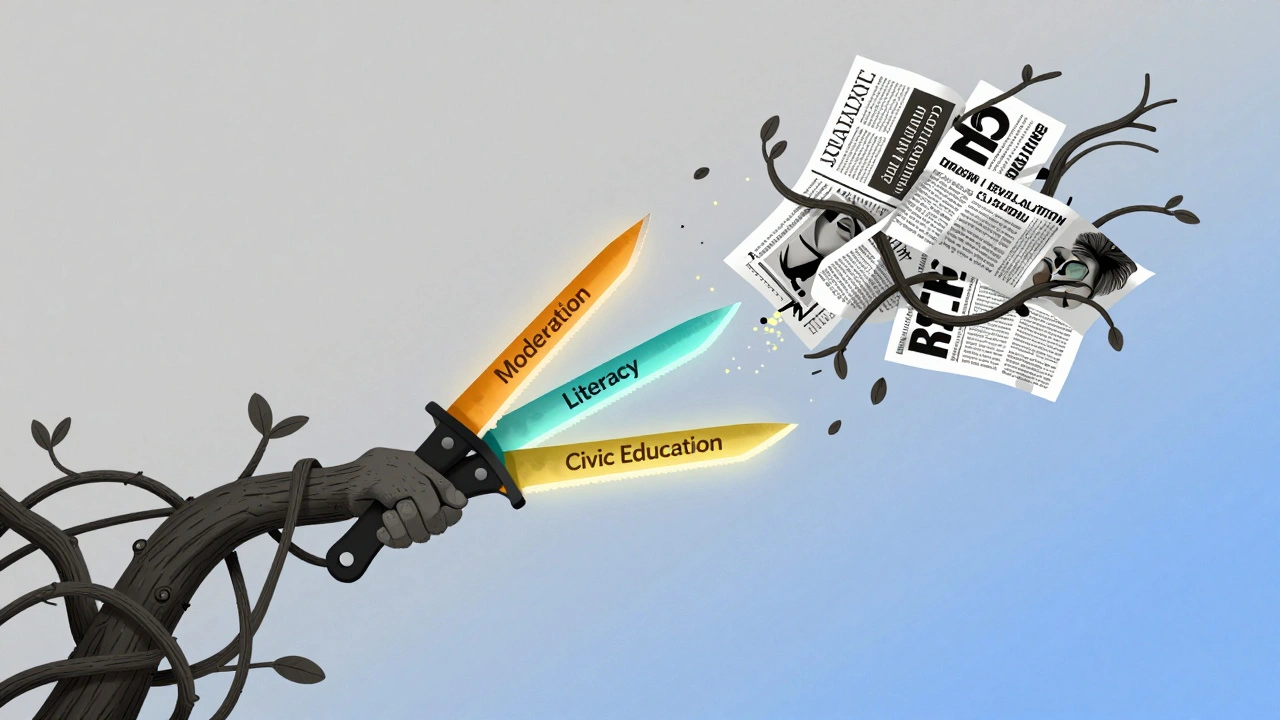

False information spreads faster than truth. It doesn’t matter if you’re scrolling through Instagram, checking a news headline on your phone, or seeing a meme in a family group chat - misinformation is everywhere. And it’s not just annoying. It changes votes, fuels panic, and even costs lives. So how do we stop it? The answer isn’t one trick. It’s three things working together: content moderation, media literacy, and civic education.

Content Moderation: What Platforms Actually Do

Social media companies don’t just sit back and watch misinformation spread. They have teams, algorithms, and rules designed to pull down false content. But what works? And what doesn’t?

Most people think fact-checking is the main tool. And yes, warning labels on false posts do help. A 2023 MIT study found that when users saw a simple label saying "This post contains false information," belief in the claim dropped by 27%, and sharing dropped by 25%. Even more surprising - it worked for people who didn’t trust fact-checkers. Their belief still fell by 13%.

But labels are just the tip of the iceberg. The real power lies in what platforms do behind the scenes: downranking and deplatforming. Downranking means pushing false content down so it rarely shows up in feeds or search results. It’s like hiding a rumor in the back of a library instead of shouting it in the middle of a room. Studies show this works better than trying to change people’s minds after they’ve seen the lie.
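To make the idea of downranking concrete, here is a minimal sketch in Python. It is an illustration only, not how any real platform ranks content: the `Post` fields, the `flagged_false` flag, and the `DOWNRANK_FACTOR` value are all hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    engagement_score: float      # hypothetical base ranking score
    flagged_false: bool = False  # hypothetical flag, e.g. set after review


# Hypothetical multiplier; platforms do not publish values like this.
DOWNRANK_FACTOR = 0.1


def rank_feed(posts: list[Post]) -> list[Post]:
    """Return posts sorted by adjusted score, highest first."""

    def adjusted(post: Post) -> float:
        # Flagged posts stay in the feed but with a much lower score.
        if post.flagged_false:
            return post.engagement_score * DOWNRANK_FACTOR
        return post.engagement_score

    return sorted(posts, key=adjusted, reverse=True)


feed = rank_feed([
    Post("rumor", 90.0, flagged_false=True),
    Post("local-news", 40.0),
    Post("recipe", 25.0),
])
print([p.post_id for p in feed])  # ['local-news', 'recipe', 'rumor']
```

The flagged post is not deleted. It simply drops from first place to last, which is the "back of the library" effect described above.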

Deplatforming - banning entire accounts - is the most controversial move. Think of it as removing a loudspeaker from a public square. But here’s the thing: academic research barely studies it. In fact, a review of hundreds of studies from 2016 to 2021 found not a single one looked at deplatforming. Meanwhile, platforms like Meta, X, and YouTube use it constantly. That’s a huge gap between what’s done and what’s proven.

Public opinion helps clarify what’s acceptable. When researchers asked people what to do with false posts, they found clear patterns. Holocaust denial got removed in 71% of cases. Election lies? 69%. Climate denial? Only 58%. Why? Because people see some lies as more dangerous than others. The more harm a lie could cause - like stopping people from getting vaccines - the more likely they are to support removal. And repeated offenders? They get hit harder than first-time offenders. Your political views? Your follower count? Those didn’t matter. Only the content and its potential damage did.
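The pattern in those survey responses can be sketched as a simple decision rule: the response scales with potential harm, repeat offenders are escalated one step, and nothing else is considered. The sketch below is hypothetical throughout. The `Action` levels, the 1-to-3 harm scale, and the one-step escalation are assumptions made for illustration, not anything the survey or a platform defines.

```python
from enum import Enum


class Action(Enum):
    LABEL = 1
    DOWNRANK = 2
    REMOVE = 3
    SUSPEND = 4


def choose_action(harm_level: int, prior_violations: int) -> Action:
    """Pick a response from harm and history only.

    harm_level: hypothetical scale, 1 (low) to 3 (severe).
    prior_violations: number of earlier confirmed violations.
    Political leaning and follower count are deliberately not inputs.
    """
    if harm_level not in (1, 2, 3):
        raise ValueError("harm_level must be 1, 2, or 3")
    if prior_violations < 0:
        raise ValueError("prior_violations cannot be negative")
    # Start from the harm level; repeat offenders move up one step.
    step = harm_level + (1 if prior_violations > 0 else 0)
    return Action(min(step, Action.SUSPEND.value))


print(choose_action(1, 0))  # Action.LABEL
print(choose_action(3, 2))  # Action.SUSPEND
```

The useful part is what the function signature leaves out: there is no parameter through which a poster's politics or popularity could change the outcome.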

Media Literacy: Teaching People to Spot Lies

You can’t moderate every post. There are too many. That’s why teaching people to think critically is just as important as removing content.

Media literacy isn’t about memorizing fake news headlines. It’s about learning how to ask: Who made this? Why? What’s missing? Where’s the evidence? A 2024 study found that people trained in media literacy were 30% better at spotting manipulated images and false claims - even when they were emotionally charged.

One of the most powerful tools is prebunking. Instead of waiting for someone to see a lie and then correcting it, you warn them first. Think of it like a vaccine. You show people a small dose of misinformation - like a fake headline - and explain how it was made. Then, you show them the real facts. People who go through prebunking training are less likely to fall for real misinformation later. It’s not magic. But it’s science.

And it works across groups. Whether you’re a teenager on TikTok or a retiree on Facebook, training helps. A program in New Mexico schools taught students to trace the origin of viral videos. Within three weeks, students were 40% more likely to check sources before sharing. One student told her mom, "This video says the moon landing was fake. But the shadow angles don’t match sunlight patterns. That’s impossible." That’s media literacy in action.

Civic Education: Building a Stronger Information Foundation

Media literacy helps individuals. Civic education changes the whole system.

Most people think civic education is just about voting or government structure. But in today’s world, it’s also about understanding how information flows - who controls it, how it’s monetized, and why some voices get louder than others.

Take news ecosystems. Local newspapers are dying. That leaves communities with fewer trusted sources. When people can’t find reliable local news, they turn to social media. And social media thrives on outrage. That’s why false claims about school boards or public health spread so fast in towns without strong journalism.

Civic education that includes media literacy, journalism ethics, and digital rights gives people the tools to demand better. It teaches them that misinformation isn’t just a personal mistake - it’s a system failure. When people understand how algorithms profit from lies, they start asking for change. They pressure platforms. They support independent journalists. They vote for policies that require transparency.

And it’s working. In Albuquerque, a civic education pilot in community centers taught adults to identify misinformation about healthcare and housing. After six months, participants were twice as likely to contact local officials about false claims they saw online. That’s not just awareness - that’s power.

The Big Gap: What We’re Not Studying

Here’s the uncomfortable truth: we’re studying the wrong things.

Researchers spend most of their time on fact-checking and warning labels. But platforms are doing far more. They’re changing how money flows - cutting ad revenue from false content. They’re limiting how fast posts spread. They’re blocking bot networks before they go viral. And none of that is being studied properly.

Worse, almost no one is studying the people who create misinformation. Who are they? Why do they do it? How do they adapt when platforms change rules? If we don’t understand the source, we can’t stop the flow.

And what about long-term effects? Most studies measure impact over hours or days. But does media literacy stick? Does a ban on a fake news account make someone rethink their beliefs - or just move to a different platform? We don’t know.

What we need is more data sharing. Platforms should give researchers access to real, anonymized data on how misinformation spreads. Not just on Facebook, but on TikTok, Telegram, Discord - everywhere. We need longitudinal studies. We need to test interventions on real users, not just in labs.

What Works Right Now?

So what should we do today?

- Support warning labels - they’re simple, cheap, and effective, even for skeptics.

- Push for downranking - hiding false content works better than arguing with people after they’ve seen it.

- Invest in prebunking - teach people how lies are made before they see them.

- Expand civic education - schools and community centers should teach how information systems work, not just how to vote.

- Demand transparency - platforms should publish clear rules on moderation, and explain why some posts are removed and others aren’t.

There’s no silver bullet. But combining these approaches - moderation, literacy, and education - gives us the best shot.

Why This Matters in 2026

We’re not just fighting lies. We’re fighting the erosion of shared reality. When people can’t agree on basic facts - whether it’s climate data, election results, or public health - democracy weakens. Communities fracture. Trust disappears.

And it’s not just about politics. Misinformation kills. False claims about vaccines led to preventable deaths. False rumors about food shortages caused panic buying. False stories about schools led to threats against teachers.

Stopping misinformation isn’t about censorship. It’s about building resilience. It’s about giving people the tools to think for themselves, the systems to support truth, and the power to hold platforms accountable.

Do warning labels really work if people don’t trust fact-checkers?

Yes. A 2023 MIT study showed that even among people who distrusted fact-checkers, warning labels reduced belief in false claims by 13% and sharing by 17%. The label itself - not the source - is what changes behavior. It’s a nudge, not a lecture.

Is content moderation biased against certain political views?

Research shows it’s not about politics. When people were asked to decide whether to remove a post, their own political beliefs didn’t matter. What mattered was the type of misinformation and how harmful it was. Holocaust denial was removed more often than climate denial - not because of ideology, but because the harm was clearer. Partisanship and follower count had no effect.

Can media literacy training help older adults?

Absolutely. A 2024 pilot in senior centers in New Mexico found that adults over 65 who received four hours of media literacy training improved their ability to spot false information by 35%. They learned to check dates, reverse-image search photos, and look for trusted sources. Age doesn’t make you immune - but training helps.

Why isn’t deplatforming studied more?

Because researchers rely on public data, and platforms don’t share it. Deplatforming is a black box. We know it’s used heavily - but we don’t know if it stops people from spreading lies elsewhere, or if it just pushes them underground. Without access to real data, we can’t measure its impact.

What’s the most effective single strategy?

There isn’t one. But if you had to pick, downranking false content before it spreads is the most effective. It stops the problem at the source instead of trying to fix beliefs after the fact. Pair that with prebunking and civic education, and you create a layered defense.