By 2026, AI fraud detection isn’t just a tool; it’s the backbone of financial security. Banks that still rely on rule-based systems are losing millions. Fraudsters aren’t waiting. They’re using AI to create deepfake voices, forge documents, and launch coordinated attacks across borders. If your fraud system can’t keep up, you’re already behind.

How AI Sees Fraud Differently

Traditional fraud systems look for red flags: a $10,000 transfer from a new country, a login from a different city, a sudden spike in purchases. But these rules are static. They don’t adapt, and fraudsters know exactly how to slip through. AI works differently. It learns from billions of transactions and knows what normal looks like for each customer, not just in general but for that specific person. If you usually pay your rent on the 1st, spend $45 at a coffee shop every Tuesday, and never use your card after midnight, the AI builds a profile around that. When a transaction breaks that pattern (say, a $2,000 payment to a new vendor at 3 a.m.), it flags it. It does so not because the payment matches a rule, but because it deviates from your behavior.

Modern systems analyze over 200 variables in milliseconds: device fingerprint, IP location, keystroke rhythm, voice tone on phone banking, even how long you pause before entering your PIN. This isn’t guesswork. It’s pattern recognition at scale. HSBC found four times more fraud after switching to AI. DBS Bank cut false positives by 60%. That’s not luck. That’s data.

Multi-Modal Detection: More Than Just Numbers

AI fraud detection in 2026 doesn’t just look at transactions. It watches people.

- Behavioral biometrics track how you type, swipe, or hold your phone. A fraudster can steal your password, but they can’t easily copy your typing rhythm.

- Computer vision scans ID documents during onboarding. It spots fake passports, edited driver’s licenses, or synthetic identities made from stitched-together faces.

- Voice recognition listens to your call with customer service. If the voice matches your profile, you’re let through. If it’s a cloned voice from a deepfake, the system pauses the call.

- NLP scans chat logs and emails for phishing attempts. It doesn’t just look for the word "password"; it notices when someone suddenly asks for "your 2FA code" in a tone they’ve never used before.

- Graph neural networks map connections between accounts. If 17 different accounts all use the same device and IP address and transfer money to the same shell company, the system sees one coordinated network, not 17 isolated cases of fraud.
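The graph idea in the last bullet can be illustrated without a neural network. The minimal Python sketch below links accounts that share a device, IP address, or payee, then finds connected clusters with a union-find structure. A graph neural network would learn from this kind of graph rather than apply a fixed size rule. The account names, attribute strings, and the `min_accounts=5` threshold are hypothetical.

```python
from collections import defaultdict


def find(parent, node):
    # Follow parent pointers to the root, halving the path as we go.
    while parent[node] != node:
        parent[node] = parent[parent[node]]
        node = parent[node]
    return node


def union(parent, a, b):
    root_a, root_b = find(parent, a), find(parent, b)
    if root_a != root_b:
        parent[root_b] = root_a


def account_clusters(links):
    """Group accounts that are connected through any shared attribute.

    links: iterable of (account_id, attribute) pairs, where an attribute is a
    device fingerprint, IP address, or payee, e.g. ("acct7", "device:D1").
    """
    parent = {}
    for account, attribute in links:
        # Accounts and attributes are both nodes in one undirected graph.
        a, b = ("acct", account), ("attr", attribute)
        parent.setdefault(a, a)
        parent.setdefault(b, b)
        union(parent, a, b)
    clusters = defaultdict(set)
    for kind, name in list(parent):
        if kind == "acct":
            clusters[find(parent, (kind, name))].add(name)
    return list(clusters.values())


def suspicious_rings(links, min_accounts=5):
    # Hypothetical threshold: any cluster of 5+ linked accounts goes to review.
    return [c for c in account_clusters(links) if len(c) >= min_accounts]


# Hypothetical data: 17 accounts sharing one device, one IP, and one payee,
# plus two unrelated customers.
links = []
for i in range(17):
    account = f"acct{i}"
    links += [(account, "device:D1"), (account, "ip:203.0.113.7"),
              (account, "payee:ShellCo")]
links += [("alice", "device:A"), ("bob", "device:B")]

rings = suspicious_rings(links)
print(len(rings), len(rings[0]))  # 1 17
```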

Real-Time Prevention: The Race Against Seconds

The biggest shift in fraud prevention? It’s no longer about catching fraud after it happens. It’s about stopping it before the payment clears. With FedNow and other instant payments, money moves in seconds. If your system takes 10 minutes to flag a transaction, it’s too late. AI systems now analyze millions of transactions per second and assign each one a risk score in under 100 milliseconds. Here’s how it works:

- A customer tries to send $8,000 to a new recipient.

- The AI checks: Is this their first transfer to this recipient? Have they ever sent money at this time of day? Is the recipient’s bank flagged for past fraud? Is the device they’re using linked to 12 other accounts?

- Based on all signals, the system gives it a 92% risk score.

- Instead of blocking the transfer outright, the system triggers "smart friction": a quick video verification or a push notification asking, "Did you mean to send $8,000 to XYZ Corp?"

- If the user confirms, the transaction goes through. If not, it’s paused.
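The walkthrough above can be sketched as a small scoring function. This minimal Python illustration assumes the four checks, plus a signal for large amounts, are combined with a logistic function. The weights, the "5 or more accounts per device" cut-off, and the 0.70/0.98 decision thresholds are all hypothetical. A production model would learn its weights from labelled history and use far more than five signals.

```python
import math
from dataclasses import dataclass


@dataclass
class Transfer:
    amount: float
    first_time_recipient: bool    # never paid this recipient before
    unusual_hour: bool            # customer has never sent money at this time of day
    recipient_bank_flagged: bool  # recipient's bank has past fraud reports
    accounts_on_device: int       # accounts linked to the device being used


# Hypothetical weights and bias; a real model learns these from data.
BIAS = -3.0
WEIGHTS = {
    "first_time_recipient": 1.2,
    "unusual_hour": 0.9,
    "recipient_bank_flagged": 1.5,
    "shared_device": 1.8,
    "large_amount": 0.8,
}


def risk_score(t: Transfer) -> float:
    """Combine the signals into a 0-1 risk score with a logistic function."""
    signals = {
        "first_time_recipient": t.first_time_recipient,
        "unusual_hour": t.unusual_hour,
        "recipient_bank_flagged": t.recipient_bank_flagged,
        "shared_device": t.accounts_on_device >= 5,
        "large_amount": t.amount >= 5_000,
    }
    z = BIAS + sum(WEIGHTS[name] for name, fired in signals.items() if fired)
    return 1 / (1 + math.exp(-z))


def decide(score: float) -> str:
    # Hypothetical cut-offs: only extreme scores are blocked outright;
    # high scores get "smart friction" (a confirmation step) instead.
    if score >= 0.98:
        return "block"
    if score >= 0.70:
        return "smart_friction"
    return "allow"


suspicious = Transfer(8_000, True, True, True, accounts_on_device=12)
routine = Transfer(60, False, False, False, accounts_on_device=1)
print(decide(risk_score(suspicious)))  # smart_friction
print(decide(risk_score(routine)))     # allow
```

The middle band is what makes smart friction possible. Rather than a single block/allow cut-off, high but not extreme scores route to a confirmation step. This keeps genuine customers moving while still pausing likely fraud.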

Risk Scoring: From Guesswork to Precision

Fraud detection is just one part. Risk scoring is the engine behind lending, credit limits, and account approvals. AI-driven risk scoring doesn’t just look at credit scores. It looks at:

- How consistently someone pays bills: not just whether they pay on time, but how much they pay above the minimum.

- Whether their income pattern matches their spending habits.

- If their job history shows stability or frequent gaps.

- How they interact with the bank’s app: do they log in at the same time every day? Do they use the same device?
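To make the list concrete, here is a minimal Python sketch that derives features of this kind from account history. The function name, input shapes, and feature definitions are hypothetical illustrations, not any bank’s actual scoring inputs. A risk model would consume features like these rather than replace them.

```python
from statistics import mean, pstdev


def credit_features(bills, monthly_income, monthly_spend, login_hours):
    """Turn raw account history into the kinds of signals listed above.

    bills: list of (amount_paid, minimum_due, paid_on_time) tuples
    monthly_income, monthly_spend: amounts per month, oldest first
    login_hours: hour of day (0-23) of recent app logins
    """
    return {
        # Share of bills paid on time.
        "on_time_rate": mean(1.0 if on_time else 0.0 for _, _, on_time in bills),
        # Average payment as a multiple of the minimum due (1.0 = minimum only).
        "overpay_ratio": mean(paid / minimum for paid, minimum, _ in bills),
        # Spending as a share of income; values near or above 1.0 suggest strain.
        "spend_to_income": mean(monthly_spend) / mean(monthly_income),
        # Months with no income at all, a crude proxy for employment gaps.
        "income_gap_months": sum(1 for amount in monthly_income if amount == 0),
        # Spread of login hours; low values mean a steady routine. (This ignores
        # wrap-around at midnight, which a real feature would handle.)
        "login_hour_spread": pstdev(login_hours),
    }


features = credit_features(
    bills=[(300, 100, True), (250, 100, True), (100, 100, False)],
    monthly_income=[4_000, 4_000, 4_000],
    monthly_spend=[3_000, 3_200, 2_800],
    login_hours=[8, 8, 9, 8],
)
print(features["spend_to_income"])  # 0.75
```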

The Black Box Problem: Why Explainability Isn’t Optional

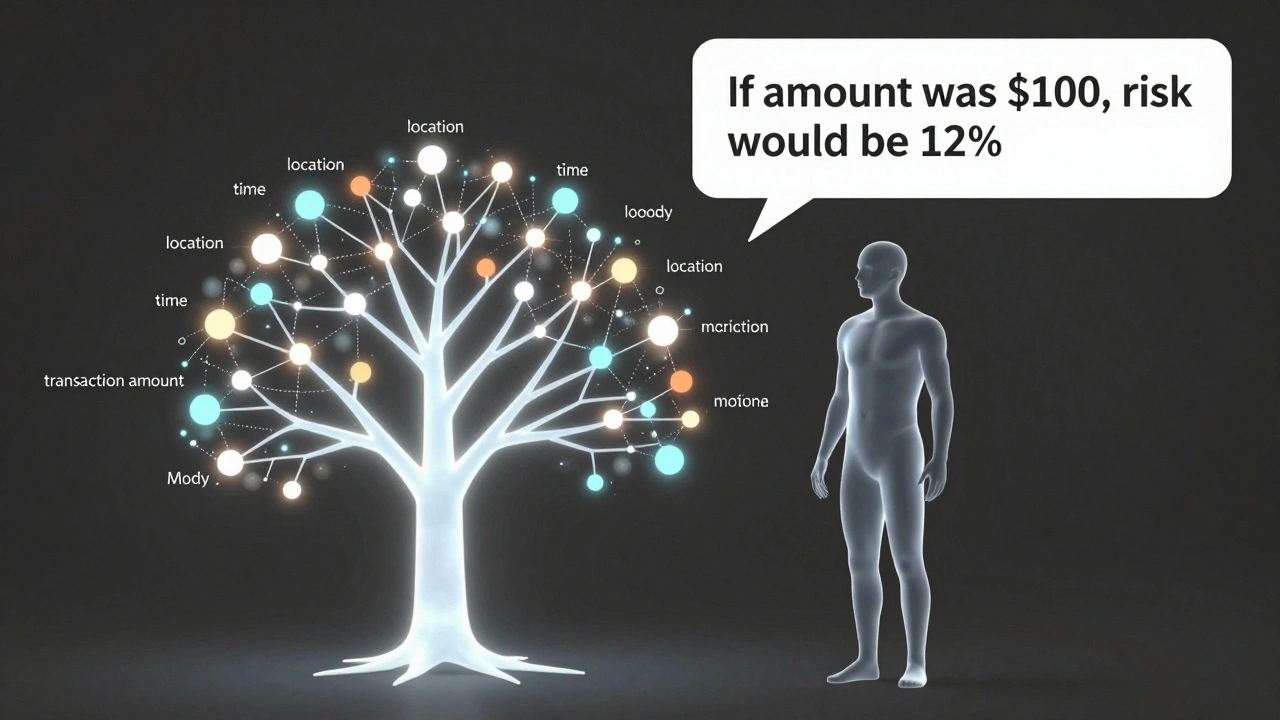

Here’s the catch: AI can be brilliant at spotting fraud, but if it says "We blocked this transaction because the model thinks it’s risky" and can’t explain why, that’s a legal and trust crisis. Regulators in the U.S., EU, and beyond now require financial institutions to explain AI decisions, not just to auditors but to customers. If your loan is denied or your card is frozen, you have a right to know why. That’s where model explainability comes in. It’s not about making AI "simple." It’s about making its reasoning transparent. Leading banks now use techniques like:

- SHAP values to show which factors (like location or transaction amount) most influenced the decision.

- Counterfactual explanations: "If your last transaction had been $100 instead of $5,000, the risk score would have been 12% instead of 89%."

- Local interpretable models that create a simplified, human-readable version of the AI’s logic for each individual case.
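The first two techniques are easiest to see on a linear model, where both have closed forms. The Python sketch below assumes a hypothetical logistic-regression fraud model; its weights, bias, and background feature means are made up. It computes exact SHAP values in log-odds units, which holds for linear models with independent features. It then answers a counterfactual question by solving for the transaction amount at which the score crosses a threshold. Real fraud models are usually nonlinear, so SHAP values must be estimated (for example with the `shap` library) and counterfactuals found by search.

```python
import math

# Hypothetical logistic-regression fraud model over three features.
BIAS = -8.0
WEIGHTS = {"log_amount": 0.9, "new_device": 1.4, "night": 1.1}
# Hypothetical feature averages over a background sample of normal traffic.
BACKGROUND_MEAN = {"log_amount": 4.0, "new_device": 0.1, "night": 0.2}


def features(amount, new_device, night):
    return {"log_amount": math.log(amount),
            "new_device": float(new_device),
            "night": float(night)}


def margin(x):
    # Model output in log-odds units.
    return BIAS + sum(WEIGHTS[k] * x[k] for k in WEIGHTS)


def score(x):
    return 1 / (1 + math.exp(-margin(x)))


def shap_values(x):
    # For a linear model with independent features, the exact SHAP value of
    # feature k (in log-odds units) is w_k * (x_k - E[x_k]).
    return {k: WEIGHTS[k] * (x[k] - BACKGROUND_MEAN[k]) for k in WEIGHTS}


def max_amount_below(threshold, new_device, night):
    # Counterfactual: the amount at which the score reaches `threshold`,
    # holding the other features fixed. Solves margin = logit(threshold).
    target = math.log(threshold / (1 - threshold))
    rest = BIAS + WEIGHTS["new_device"] * new_device + WEIGHTS["night"] * night
    return math.exp((target - rest) / WEIGHTS["log_amount"])


x = features(5_000, new_device=True, night=True)
phi = shap_values(x)
print(max(phi, key=phi.get))  # log_amount
print(round(score(x), 2), round(score(features(100, True, True)), 2))
print(round(max_amount_below(0.5, True, True)))
```

The SHAP values add up to the difference between this transaction’s log-odds and the log-odds at the background average. That additivity is what lets a bank say "the amount contributed most" with a concrete number.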

The Arms Race: AI vs. AI

Fraudsters aren’t sitting still. They’re using AI too. They train models to mimic human behavior. They test their scams against banks’ detection systems to find weaknesses. They use generative AI to create synthetic identities that look real on paper and in video calls. This means banks can’t deploy AI once and walk away. They have to keep evolving it. Every fraud pattern that’s caught becomes training data for the next version of the model. Every new deepfake technique gets added to the detection list. The most successful institutions now treat fraud prevention like a living system. They monitor it daily, retrain models weekly, and collaborate with other banks to share anonymized fraud signals. No single bank can stop this alone.

What Comes Next

The future of AI in financial services isn’t just about stopping fraud. It’s about predicting it. Imagine a system that notices a customer’s behavior slowly shifting (smaller purchases, later logins, more frequent password resets) and quietly reaches out: "We’ve noticed some unusual activity. Want to check your security settings?" Before fraud happens. Before the customer even knows something’s wrong. That’s the next frontier: real-time behavioral intelligence, continuous adaptation, and human-AI collaboration. The banks that win won’t be the ones with the most powerful AI. They’ll be the ones that build explainable, adaptive, and collaborative systems: systems that protect without alienating, and that learn faster than fraudsters can evolve.

Frequently Asked Questions

Can AI fraud detection ever be wrong?

Yes, but far less often than older systems. AI still generates false positives: transactions flagged as fraud when they’re legitimate. But modern systems reduce these by 60-90% compared to rule-based tools. The key is continuous learning: as customers change their behavior, the AI adapts. A customer who suddenly travels abroad won’t be blocked forever. Their profile updates, and the system learns.
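One simple way a profile can "update" is an exponentially weighted moving average. The Python sketch below assumes a hypothetical profile that tracks the share of a customer’s confirmed transactions made abroad. A purchase abroad triggers a verification step only while that share is low, so after a few confirmed purchases on a trip the flags stop. The class name, `alpha=0.1`, and the 0.2 threshold are illustrative assumptions, not parameters from any real system.

```python
class TravelProfile:
    """Hypothetical per-customer profile: an exponentially weighted share of
    recent, customer-confirmed transactions that were made abroad."""

    def __init__(self, alpha=0.1, flag_below=0.2):
        self.alpha = alpha            # weight given to the newest transaction
        self.flag_below = flag_below  # abroad share below which travel looks unusual
        self.abroad_share = 0.0       # starts as a purely domestic customer

    def is_unusual(self, abroad: bool) -> bool:
        return abroad and self.abroad_share < self.flag_below

    def update(self, abroad: bool) -> None:
        # Exponentially weighted moving average: old behavior fades and new
        # behavior counts more. Called only after the customer confirms.
        self.abroad_share = ((1 - self.alpha) * self.abroad_share
                             + self.alpha * float(abroad))


profile = TravelProfile()
flags = []
for _ in range(5):  # five purchases on a trip abroad, each confirmed by the customer
    flags.append(profile.is_unusual(abroad=True))
    profile.update(abroad=True)
print(flags)  # [True, True, True, False, False]
```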

Do I need quantum computing to use AI fraud detection?

No. Most banks today use advanced machine learning on cloud-based systems, not quantum hardware. Quantum-enhanced AI is still experimental and used by only a few elite institutions. The real advantage isn’t hardware; it’s data quality, model design, and real-time processing. You don’t need quantum computing to build a highly accurate fraud system. You need clean data, smart engineers, and a culture of testing and iteration.

How do banks ensure AI doesn’t discriminate against certain customers?

Regulators now require fairness audits. Banks test their models across demographic groups (age, income, zip code, ethnicity) to ensure no group is unfairly flagged. If a model consistently blocks transactions from certain neighborhoods, it’s flagged for review. Many institutions now use fairness-aware algorithms that actively correct for bias during training. Transparency and ongoing monitoring are mandatory, not optional.
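A basic fairness audit compares outcomes across groups. The Python sketch below computes flag rates per group and the ratio of the lowest to the highest pass-through rate. It marks the model for review when that ratio falls below 0.8. That cut-off borrows the "four-fifths" rule of thumb from U.S. employment-selection guidance and is used here only as an illustrative assumption. The group labels and counts are hypothetical.

```python
from collections import Counter


def flag_rates(decisions):
    """decisions: iterable of (group, was_flagged) pairs."""
    totals, flagged = Counter(), Counter()
    for group, was_flagged in decisions:
        totals[group] += 1
        flagged[group] += int(was_flagged)
    return {group: flagged[group] / totals[group] for group in totals}


def impact_ratio(decisions):
    # Ratio of the lowest to the highest "passed without a flag" rate.
    pass_rates = [1 - rate for rate in flag_rates(decisions).values()]
    return min(pass_rates) / max(pass_rates)


def needs_review(decisions, threshold=0.8):
    return impact_ratio(decisions) < threshold


# Hypothetical audit sample: 100 transactions from each of two zip codes.
decisions = ([("zip_A", i < 5) for i in range(100)]
             + [("zip_B", i < 30) for i in range(100)])
print(flag_rates(decisions))    # {'zip_A': 0.05, 'zip_B': 0.3}
print(needs_review(decisions))  # True
```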

What happens if an AI system is hacked?

AI models are protected like any other critical system. They’re isolated in secure environments, encrypted, and monitored for tampering. Most banks use adversarial training, which teaches the model to recognize and resist manipulation. If someone tries to feed fake data to confuse the model, the system is designed to detect the attack and block it. The bigger risk isn’t hacking; it’s model drift. If the model stops learning from new data while customer and fraudster behavior keeps changing, it becomes outdated. That’s why continuous retraining is built into well-run systems.

Can small banks afford AI fraud detection?

Yes. Cloud-based AI platforms now offer fraud detection as a service. Small banks don’t need to build their own models. They can subscribe to third-party systems that handle the infrastructure, training, and updates. Some providers charge as little as $0.01 per transaction analyzed. For a regional bank processing 50,000 transactions a day, that’s $500 a day, far less than the cost of one major fraud incident.
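The arithmetic behind that estimate is easy to check. This short Python sketch works in integer cents to avoid floating-point rounding. The $0.01 rate and the 50,000-transaction daily volume come from the example. The annual figure assumes the same volume every day of a 365-day year.

```python
PRICE_PER_TXN_CENTS = 1   # $0.01 per transaction analyzed
TXNS_PER_DAY = 50_000     # the regional-bank volume in the example


def service_cost_dollars(days: int) -> float:
    # Multiply in integer cents, convert to dollars only at the end.
    cents = PRICE_PER_TXN_CENTS * TXNS_PER_DAY * days
    return cents / 100


print(service_cost_dollars(1))    # 500.0
print(service_cost_dollars(365))  # 182500.0
```

At that rate, the service pays for itself if it prevents more than about $182,500 in fraud losses a year.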