
By 2026, your phone doesn’t just recognize your face; it understands your hand gestures before you finish them. Your car doesn’t wait for a cloud server to tell it to brake; it already has. And in a factory thousands of miles away, a machine is fixing itself before anyone even notices it’s broken. This isn’t science fiction. It’s edge AI: artificial intelligence running directly on the device, with no need to send data back to the cloud. And it’s changing everything.

Why Edge AI? Because the Cloud Isn’t Fast Enough

For years, AI ran in the cloud. Your voice command went to a server, got processed, and came back. But that delay? It adds up. In a self-driving car, a 200-millisecond lag can mean a collision. In a factory, waiting for a cloud response can mean a shutdown that costs $10,000 per minute. Edge AI cuts that delay to under 10 milliseconds by doing the computation right where the data is generated. It’s not just about speed. Sending raw video, sensor readings, or voice clips to the cloud eats bandwidth, drains batteries, and risks privacy. Edge AI keeps that data local. Your health monitor doesn’t upload your heart rhythm; it analyzes it on your wrist. Your security camera doesn’t stream every frame; it only sends an alert when it spots a face it doesn’t recognize.

The Hardware Behind the Quiet Revolution

This isn’t just software. It’s new chips. Traditional GPUs used in data centers are power-hungry beasts. Edge devices? They run on coin-cell batteries or solar panels. So manufacturers built something better suited to the job: Neural Processing Units, or NPUs. NPUs are designed for one thing: running AI models with minimal energy. They use 10 to 20 times less power than a GPU. That’s why your smartphone can run facial recognition all day without killing the battery, and why a sensor bolted to a wind turbine in rural Wyoming can last 18 months on a single charge. Even more advanced are neuromorphic chips: hardware that mimics how neurons fire in the human brain. These chips don’t just process data; they learn patterns in a way loosely modeled on the brain, using fractions of a watt. Companies like Intel and Qualcomm are working to bring this kind of hardware to industrial sensors, drones, and medical wearables. The result? Devices that think, adapt, and act without ever needing Wi-Fi.

Small Language Models: AI That Talks to Workers, Not Servers

You’ve heard of ChatGPT. Now imagine a version of it that fits inside a tablet on a factory floor. That’s a Small Language Model (SLM). These aren’t massive 100-billion-parameter models. They’re lean, focused, and trained on specific tasks: reading error codes, explaining maintenance procedures, or translating safety manuals in real time. In a pharmaceutical plant, a worker taps a button on a tablet when a machine flashes a code. The SLM on the device instantly pulls up the fix, with no cloud connection needed. No lag. No downtime. This matters because many factories still operate in areas with spotty internet. Edge AI with SLMs turns every worker into an expert, even on equipment they’ve never seen before. These models are also getting smarter. A camera on a robot arm can now see a misaligned part and say, “Replace the bearing on axis 3.” It’s not just vision; it’s vision plus language, all running locally. That’s the next leap: vision-language models at the edge, turning machines into teammates.

Manufacturing: The Quiet Factory That Fixes Itself

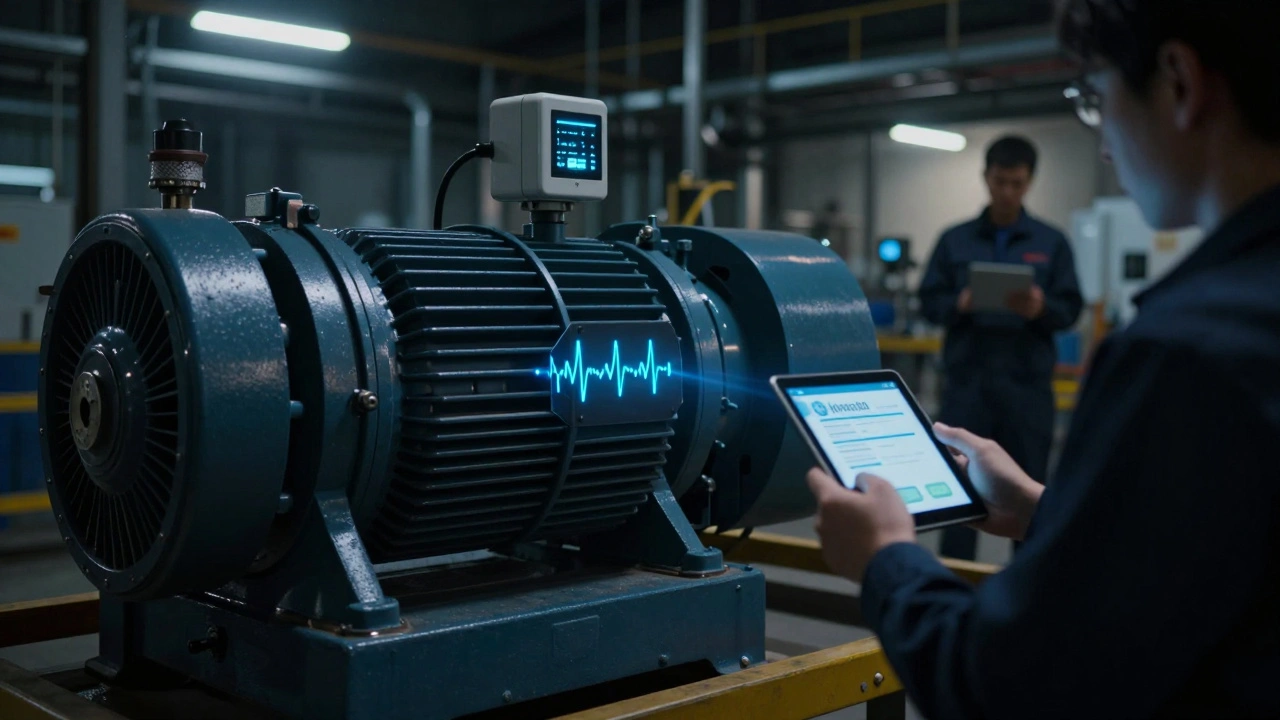

In 2026, the most advanced factories don’t just automate; they anticipate. Edge AI sensors on rotating pumps, conveyor belts, and CNC machines monitor vibration, temperature, and sound. They don’t wait for a breakdown. They predict it. A study from a major automotive supplier showed edge-based predictive maintenance caught 95% of failures 48 to 72 hours before they happened. That’s not a nice-to-have: for that supplier, it meant $2.3 million a year in avoided downtime. One chemical plant cut unplanned outages by 40% and extended equipment life by 25% just by installing edge AI nodes. Quality control is another win. In food packaging, a single contaminated product can trigger a recall. Edge AI vision systems scan 1,200 packages per minute, detecting microscopic flaws (dents, misprints, foreign particles) that human inspectors miss. And because the model learns from new images right on-site, it gets better without uploading data.
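
The predictive models behind figures like these aren’t specified. As a minimal stand-in, assuming one vibration reading per sample, a rolling z-score detector shows the basic idea of on-device anomaly flagging: keep a short baseline of recent normal readings and flag anything far outside it. The class name `RollingZScore`, the window size, and the threshold are illustrative choices, not values from the study mentioned above.

```python
import statistics
from collections import deque


class RollingZScore:
    """Flags readings that sit far outside a rolling baseline window (illustrative sketch)."""

    def __init__(self, window: int = 200, threshold: float = 4.0, min_baseline: int = 10):
        self.baseline = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold            # z-score above which a reading is flagged
        self.min_baseline = min_baseline      # readings needed before flagging starts

    def update(self, reading: float) -> bool:
        """Return True if `reading` is anomalous; only normal readings join the baseline."""
        if len(self.baseline) >= self.min_baseline:
            mean = statistics.fmean(self.baseline)
            std = statistics.stdev(self.baseline)
            deviation = abs(reading - mean)
            # A perfectly flat baseline (std == 0) flags any deviation at all.
            if (std == 0 and deviation > 0) or (std > 0 and deviation / std > self.threshold):
                return True  # keep the spike out of the baseline
        self.baseline.append(reading)
        return False


if __name__ == "__main__":
    detector = RollingZScore()
    # Hypothetical vibration amplitudes (mm/s): steady operation, then one spike.
    readings = [2.0, 2.1, 1.9, 2.05, 2.0, 1.95, 2.1, 2.0, 1.9, 2.05, 2.0, 6.5, 2.0]
    for i, r in enumerate(readings):
        if detector.update(r):
            print(f"sample {i}: {r} flagged")
```

Anomalous readings are kept out of the baseline so a single spike doesn’t inflate the spread and mask the next one; a production system would also need a way to adapt when operating conditions genuinely change.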

Cars, Drones, and the Race for Instant Reaction

Autonomous vehicles don’t “think” in the cloud. They think in milliseconds. LIDAR, radar, and camera data from a self-driving car are processed locally by an NPU inside the vehicle. That’s why your car can swerve around a child on a bike before you even see them. Trucks in logistics fleets now use edge AI to platoon, driving in tight formation with braking and acceleration synchronized between vehicles. This isn’t just fuel-efficient: in fleet tests, it cut accident rates by 30%. Drones inspect power lines, oil rigs, and solar farms. Instead of sending back 4K video for human review, they analyze the footage on board. If a cracked insulator or corroded bolt is found, the drone flags it and sends a summary instead of hours of footage. That saves bandwidth, time, and money.

Healthcare That Doesn’t Wait for Internet

In rural clinics or emergency ambulances, internet access is often unreliable. But patient data can’t wait. Edge AI in wearable ECG monitors now detects atrial fibrillation in real time. If the system spots an anomaly, it alerts the paramedic on the spot, not a hospital server 200 miles away. Portable ultrasound devices with embedded AI can now estimate heart function or detect fluid in the lungs during a single scan. The model runs locally, so it works on a mountain trail or in a disaster zone. No cloud. No delay. Just life-saving insight.

Security, Retail, and the Invisible AI Around You

Your local store doesn’t need you to scan a barcode. Cameras with edge AI detect when a shelf is low, recognize loyal customers as they walk in, and even notice if someone is loitering near the electronics section. All of this happens in under 50 milliseconds, with no server round trip. Smart checkout systems now use computer vision to identify items as you place them in a cart. You walk out. The system charges your account. No lines. No scanners. Just AI watching silently, locally. In public spaces, cameras with edge AI detect unattended bags, identify aggressive behavior, or track crowd flow, all without recording or uploading video. Privacy is better protected because raw footage never leaves the device.
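
One way to picture “raw footage never leaves the device” is a pipeline whose only output is small event records. The sketch below assumes a hypothetical on-device detector that yields `(label, confidence)` pairs; only detections above a confidence threshold become compact JSON events, and frames are never serialized. The function names, the 0.8 threshold, and the 25 Mbps bitrate used for comparison are all assumptions for illustration.

```python
import json
import time


def video_bytes(bitrate_mbps: float, seconds: float) -> float:
    """Bytes a continuous stream would upload at `bitrate_mbps` (megabits per second)."""
    return bitrate_mbps * 1_000_000 / 8 * seconds


def detections_to_events(camera_id: str, detections: list[tuple[str, float]],
                         min_confidence: float = 0.8) -> list[bytes]:
    """Turn detector output into compact JSON events; no image data is included."""
    events = []
    for label, confidence in detections:
        if confidence >= min_confidence:
            record = {"camera": camera_id, "event": label,
                      "confidence": round(confidence, 2), "ts": int(time.time())}
            events.append(json.dumps(record, separators=(",", ":")).encode("utf-8"))
    return events


if __name__ == "__main__":
    # Hypothetical detector output for one minute of footage.
    detections = [("shelf_low", 0.91), ("person", 0.55), ("unattended_bag", 0.87)]
    events = detections_to_events("aisle-7", detections)
    print(f"events: {len(events)}, {sum(len(e) for e in events)} bytes")
    print(f"raw video for the same minute: {video_bytes(25, 60) / 1e6:.1f} MB")
```

For the sample input, two events of well under a kilobyte combined stand in for roughly 187.5 MB of video at the assumed bitrate.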

The Future: AI That Acts, Not Just Reacts

The next phase isn’t just intelligence at the edge. It’s autonomy. We’re seeing the rise of agentic AI: systems that don’t just analyze but also decide and act. Imagine a smart grid that doesn’t just detect a power surge but reroutes electricity across neighborhoods in real time. Or a warehouse robot that reorders its own parts before it runs out. These aren’t distant dreams. They’re being tested now. Hybrid architectures are key. The edge handles the urgent: real-time decisions. The cloud handles the big picture: training new models, analyzing trends over months, and updating software. Together, they’re more powerful than either alone.

Challenges? Yes. But They’re Solvable

Edge AI isn’t perfect. Managing thousands of devices across different hardware types is messy. Updating models without internet is tricky. Security at the edge needs to be as tight as in the cloud. But solutions are here. Platforms like Red Hat’s Edge and Dell’s Edge Insights now offer centralized management for distributed edge nodes. Over-the-air updates can be queued and applied whenever a device reconnects, so intermittent connectivity no longer blocks them. Security is baked into the hardware: encrypted boot, secure enclaves, and hardware-based isolation. The biggest barrier isn’t tech. It’s mindset. Companies still think AI means servers and data centers. But the future is small, silent, and everywhere.

What This Means for You

If you’re in manufacturing, your next upgrade isn’t a robot; it’s a sensor that predicts failure. If you’re in retail, your next innovation isn’t an app; it’s a camera that knows your customers better than your loyalty program. If you’re in healthcare, your next tool isn’t a machine; it’s a wearable that saves lives before an ambulance arrives. Edge AI isn’t about making devices smarter. It’s about making them independent. It’s about intelligence that doesn’t need permission. That doesn’t need Wi-Fi. That doesn’t wait. In 2026, the most powerful AI isn’t in a data center. It’s in your pocket. In your car. On your factory floor. And it’s already working.

What is the main advantage of edge AI over cloud AI?

The main advantage is speed and independence. Edge AI processes data right on the device, cutting response time from hundreds of milliseconds to a few milliseconds. It keeps working without an internet connection, uses less power, and keeps sensitive data local, improving privacy and reliability.
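
To make the gap between a cloud round trip and on-device inference concrete, the sketch below computes how far a vehicle travels while it waits for a decision. The 100 km/h speed is an illustrative input, and the 200 ms and 10 ms latencies are the cloud and edge figures cited earlier, not measurements.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled at `speed_kmh` while waiting `latency_ms` for a result."""
    speed_m_per_s = speed_kmh * 1000 / 3600   # km/h -> m/s
    return speed_m_per_s * latency_ms / 1000  # ms -> s


if __name__ == "__main__":
    for label, latency_ms in [("cloud", 200.0), ("edge", 10.0)]:
        metres = distance_during_latency(100.0, latency_ms)
        print(f"{label}: {latency_ms:.0f} ms -> {metres:.2f} m at 100 km/h")
```

At 100 km/h the car covers about 5.6 m during a 200 ms wait and under 0.3 m during a 10 ms wait. Real stopping distance also includes braking time and sensor processing, which this sketch leaves out.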

Can edge AI work without any internet connection?

Yes. In fact, many edge AI systems are designed for offline use. Factories in remote areas, rural clinics, and autonomous vehicles rely on edge AI precisely because they can’t count on stable internet. Models are preloaded on the device and updated whenever connectivity is available.
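
Systems built for offline use often pair local inference with a store-and-forward buffer: results are queued on the device and synchronized once a link is available. The class below is a minimal sketch of that pattern; `StoreAndForward` and the `send` callback are hypothetical names, and `send` stands in for whatever uplink a real device uses.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Queues records locally and forwards them oldest-first when a link is up (sketch)."""

    def __init__(self, max_items: int = 10_000):
        # Bounded buffer: when full, the oldest record is discarded.
        self.buffer = deque(maxlen=max_items)

    def record(self, item: dict) -> None:
        self.buffer.append(item)

    def flush(self, send: Callable[[dict], bool]) -> int:
        """Send queued records in order and stop at the first failure.

        `send` returns True once a record is delivered. Returns the number sent.
        """
        sent = 0
        while self.buffer:
            if not send(self.buffer[0]):
                break  # link dropped; keep the rest for the next attempt
            self.buffer.popleft()
            sent += 1
        return sent


if __name__ == "__main__":
    queue = StoreAndForward(max_items=3)
    for seq in range(5):
        queue.record({"seq": seq, "status": "ok"})
    print(queue.flush(lambda item: False))  # offline: nothing is sent

    delivered = []

    def uplink(item: dict) -> bool:
        delivered.append(item)  # pretend the record reached the server
        return True

    print(queue.flush(uplink), [d["seq"] for d in delivered])
```

Because the buffer is bounded, a long outage drops the oldest records first; whether to drop, compress, or persist to flash instead is a per-application choice.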

How do small language models (SLMs) differ from big ones like ChatGPT?

SLMs are much smaller, often under 1 billion parameters, and are trained for one specific task like reading error codes or explaining safety steps. They’re faster, use less power, and fit on edge devices. ChatGPT needs cloud servers and high bandwidth; SLMs run on a wristband or a sensor with no internet.
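
Parameter count translates fairly directly into memory, which is a large part of why model size decides what fits on a device. A rough estimate multiplies the number of parameters by the bytes each one occupies at a given numeric precision. This covers weights only; activations, any attention cache, and runtime overhead are left out, so treat the result as a lower bound.

```python
# Bytes per parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"unknown precision: {precision}")
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for params in (1e9, 100e9):
        sizes = ", ".join(f"{p}: {weight_memory_gb(params, p):.1f} GB" for p in BYTES_PER_PARAM)
        print(f"{params / 1e9:.0f}B params -> {sizes}")
```

By this estimate, a 1-billion-parameter model needs about 2 GB at 16-bit precision and about 0.5 GB when quantized to 4 bits, while a 100-billion-parameter model needs about 200 GB at 16-bit precision, far beyond a typical edge device.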

Why are NPUs better than GPUs for edge AI?

NPUs are built specifically for AI inference, not general graphics. They use 10-20 times less power than GPUs and deliver faster results for tasks like image recognition or voice processing. That makes them ideal for battery-powered or energy-sensitive devices like smartphones, sensors, and medical wearables.
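
The power gap matters most for always-on devices, where average draw sets battery life. The sketch below estimates battery life for a duty-cycled sensor. The battery capacity, active and sleep power, and 1% duty cycle are hypothetical placeholders; the only relationship taken from the text is an accelerator drawing about 15 times less active power, inside the 10-20x range quoted above.

```python
# Rough battery-life estimate for a duty-cycled, always-on edge sensor.
# Every number in the example below is a hypothetical placeholder.

HOURS_PER_MONTH = 8760 / 12  # average hours in a month (730)


def average_power_mw(active_mw: float, sleep_mw: float, duty_cycle: float) -> float:
    """Time-weighted average power when active for `duty_cycle` of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return active_mw * duty_cycle + sleep_mw * (1.0 - duty_cycle)


def battery_life_months(capacity_mwh: float, avg_mw: float) -> float:
    """Months of operation for a battery of `capacity_mwh` at `avg_mw` average draw."""
    return capacity_mwh / avg_mw / HOURS_PER_MONTH


if __name__ == "__main__":
    capacity_mwh = 10_000.0  # roughly a 10 Wh pack
    sleep_mw = 0.5
    duty_cycle = 0.01        # running inference 1% of the time
    # Active draws differ by 15x, inside the 10-20x range quoted for NPUs vs GPUs.
    for name, active_mw in [("NPU-class", 50.0), ("GPU-class", 750.0)]:
        avg = average_power_mw(active_mw, sleep_mw, duty_cycle)
        months = battery_life_months(capacity_mwh, avg)
        print(f"{name}: {avg:.3f} mW average -> {months:.1f} months")
```

With these inputs the lower-power accelerator lasts roughly 13.8 months versus about 1.7 months. Because the device sleeps 99% of the time, sleep draw also counts, so the battery-life gain (about 8x here) is smaller than the raw 15x power ratio.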

Is edge AI secure?

Yes, and in some respects it can be more secure than cloud systems. Because raw data doesn’t have to leave the device, there’s less risk of interception in transit or exposure in a central breach. Modern edge hardware includes encrypted storage, secure boot, and hardware-based isolation, making it harder for attackers to tamper with the system.
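
Secure boot and signed updates both rest on checking that code or model files haven’t been altered before they run. One small piece of that check is comparing a file’s SHA-256 digest against an expected value, sketched below with Python’s standard `hashlib` and `hmac` modules. This shows integrity only: a real secure-boot chain also verifies a cryptographic signature with a key anchored in hardware, which is out of scope here, and `verify_update` is a hypothetical helper name.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def verify_update(payload: bytes, expected_sha256_hex: str) -> bool:
    """Return True only if the payload's SHA-256 digest matches the expected one."""
    actual = sha256_hex(payload)
    # Constant-time comparison avoids leaking how many leading characters match.
    return hmac.compare_digest(actual, expected_sha256_hex.strip().lower())


if __name__ == "__main__":
    model_blob = b"example model weights"  # stand-in for a downloaded model file
    expected = sha256_hex(model_blob)      # in practice, read from a signed manifest
    print(verify_update(model_blob, expected))         # True
    print(verify_update(model_blob + b"x", expected))  # False
```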