AI Vendor Evaluation Scorecard

Vendor Evaluation Calculator

Assess vendors using the 12-point framework from the article. Set weights and scores for each dimension to determine if the vendor meets minimum requirements.

The minimum passing score is 75. For high-risk AI implementations, aim for 85+.

Recommendation: Vendors scoring below 75 should be rejected unless critical factors justify the risk.

Buying an AI model isn’t like buying software or cloud storage. You’re not just paying for code; you’re paying for decisions, predictions, and sometimes, legal risk. If your procurement team is still using the same RFP templates from five years ago, you’re setting yourself up for failure. By 2026, organizations that don’t have a dedicated AI procurement playbook are seeing 37% more vendor disputes and paying up to 70% more in hidden costs. This isn’t theory. It’s what happened at a major U.S. hospital system that signed a contract for a diagnostic AI tool without defining who owned the model after fine-tuning it with their patient data. Two years later, they couldn’t switch vendors because the original provider claimed ownership of the improved version. That’s the kind of mistake a good playbook prevents.

Why Traditional Procurement Fails with AI

Most procurement teams are trained to buy things with clear specs: 100 GB RAM, 2-year warranty, 99.9% uptime. AI doesn’t work like that. An AI model isn’t a static product. It’s a living system that learns, drifts, and changes based on new data. A vendor might deliver a model that’s 95% accurate on day one, but if it’s trained on outdated data, that accuracy could drop to 72% in six months. Traditional contracts don’t account for that. They focus on delivery, not evolution. The result? Organizations end up with AI systems that don’t improve, can’t be audited, and lock them into vendors who control the data and the model. The Department of Transportation found that 65% of AI solutions evaluated in 120-day government procurement cycles were already outdated by the time the contract was signed. That’s not inefficiency. It’s obsolescence by design.

Vendor Evaluation: The 12-Point Framework

Forget asking vendors for a list of features. Instead, use a weighted scoring system based on six core dimensions:

- Data governance: Do they document where training data came from? Can you audit it? Do they use synthetic data to mask bias?

- Model transparency: Can they explain how the model makes decisions? Do they provide feature importance scores or SHAP values?

- Bias mitigation: Have they tested for demographic disparities? Can they show fairness metrics across gender, race, and age groups?

- Security posture: Are models encrypted in transit and at rest? Do they follow NIST AI Risk Management Framework 1.1?

- Change management: Can they update the model without breaking your integration? Do they offer version control and rollback options?

- Knowledge transfer: Will they train your team? Do they document model architecture, training pipelines, and failure modes?
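To make the weighted scoring concrete, here is a minimal Python sketch of how the six dimensions could roll up into one number. The 75 and 85 thresholds come from the scorecard at the top of this article. The 0-100 rating scale, the `Dimension` class, the treatment of 85 as a hard cutoff for high-risk work, and the example weights and scores are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Thresholds from the scorecard text: 75 to pass, 85+ for high-risk use.
PASS_SCORE = 75.0
HIGH_RISK_SCORE = 85.0


@dataclass
class Dimension:
    name: str
    weight: float  # relative importance; normalized in weighted_score()
    score: float   # evaluator's rating, assumed to be on a 0-100 scale


def weighted_score(dimensions: list[Dimension]) -> float:
    """Return the weight-normalized average score on a 0-100 scale."""
    total_weight = sum(d.weight for d in dimensions)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    for d in dimensions:
        if not 0 <= d.score <= 100:
            raise ValueError(f"score out of range for {d.name}")
    return sum(d.weight * d.score for d in dimensions) / total_weight


def verdict(score: float, high_risk: bool = False) -> str:
    """Compare a score to the standard or the high-risk threshold."""
    threshold = HIGH_RISK_SCORE if high_risk else PASS_SCORE
    return "pass" if score >= threshold else "reject"


# Illustrative weights and scores only; not taken from any real vendor.
vendor = [
    Dimension("Data governance", 20, 80),
    Dimension("Model transparency", 20, 70),
    Dimension("Bias mitigation", 20, 90),
    Dimension("Security posture", 15, 85),
    Dimension("Change management", 15, 60),
    Dimension("Knowledge transfer", 10, 75),
]
total = weighted_score(vendor)
print(f"{total:.2f}", verdict(total), verdict(total, high_risk=True))
# prints: 77.25 pass reject
```

The same vendor clears the general bar but not the high-risk one, which is why the weights should be agreed before any vendor is scored.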

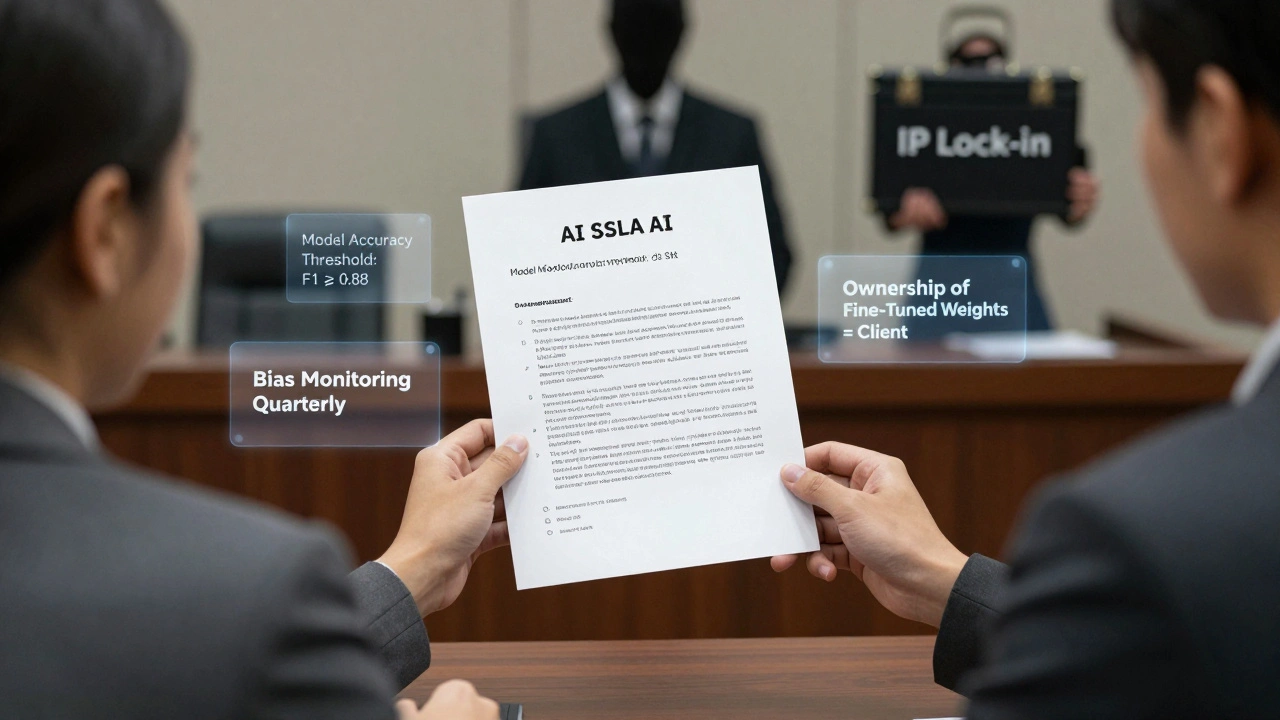

SLAs That Actually Work for AI

Your SLA can’t just say “99% uptime.” AI doesn’t have uptime. It has accuracy, fairness, and stability. Here’s what a real AI SLA looks like:

- Model accuracy threshold: Minimum F1 score of 0.88 on your production data, retested monthly.

- Drift detection: Vendor must alert you within 48 hours if input data distribution shifts by more than 15% (measured via PSI or EMD metrics).

- Bias monitoring: Quarterly fairness audits across protected attributes, with thresholds defined in the contract (e.g., disparate impact ratio must stay above 0.8).

- Response latency: 95% of predictions delivered under 200ms, with guaranteed scaling during peak loads.

- Model retraining cadence: Automatic retraining every 30 days, or sooner if drift exceeds thresholds.

- Penalties: Service credits of 15% for missed accuracy targets, 25% for unreported bias incidents.

- Exit support: If you terminate, vendor must deliver the model weights, training data lineage, and documentation in open format (e.g., ONNX or PMML).

- Security breach response: Vendor must notify you within 4 hours of any data leak or model tampering.
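The drift clause is easier to enforce if you can reproduce the vendor's number yourself. Below is a minimal sketch of the Population Stability Index (PSI) for one numeric input feature, using only the Python standard library. Several things here are assumptions rather than contract language: the equal-width binning over the baseline range, the `eps` floor for empty bins, the synthetic data, and above all the reading of "15%" as a PSI above 0.15. PSI is not a percentage, so the contract should state the metric and cutoff explicitly.

```python
import math
import random

PSI_ALERT = 0.15  # assumption: the contract's "15%" read as PSI > 0.15


def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples.

    Bin edges are equal-width over the baseline range; current values
    outside that range are counted in the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Synthetic data for illustration: one stable sample, one with a shifted mean.
rng = random.Random(0)
baseline = [rng.gauss(0.0, 1.0) for _ in range(5000)]
stable = [rng.gauss(0.0, 1.0) for _ in range(5000)]
shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]

for name, sample in [("stable", stable), ("shifted", shifted)]:
    value = psi(baseline, sample)
    print(name, round(value, 3), "ALERT" if value > PSI_ALERT else "ok")
```

In practice you would run this per feature on a schedule and compare the result with what the vendor reports. A mismatch is a reason to ask how they bin the data.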

Intellectual Property: Who Owns What?

This is where most contracts blow up. You give a vendor your proprietary data. They train a model. Then they say, “We own the model.” But you paid for it. And you fed it your secrets. Who owns the output? The playbook answer is simple: you own everything that comes from your data. Here’s how to write it:

- Input data: You retain full ownership. Vendor cannot use it for training other clients’ models.

- Base model: Vendor retains ownership of the original architecture (e.g., a fine-tuned Llama 3). But they must grant you a perpetual, royalty-free license to use, modify, and deploy it.

- Derivative works: Any model fine-tuned using your data, or improved based on your feedback, belongs to you. This includes weights, prompts, evaluation metrics, and training logs.

- Output: All predictions, classifications, or decisions generated by the model are your property. Vendor has no claim.

- Reverse engineering: Vendor cannot reverse-engineer your fine-tuned model to recreate it for competitors.

Implementation: Don’t Build a Separate Process

The biggest mistake? Creating a standalone AI procurement process. Microsoft’s playbook found that 28% more AI projects failed when handled as separate tenders. Instead, embed AI requirements into your next ICT contract renewal. If your ERP system is up for renewal in Q3, add a clause: “Any new module must be compatible with AI model integration and support model versioning via API.” Start small. Pick one high-impact, low-risk use case, like automating invoice classification or flagging fraudulent claims. Run a 90-day pilot. Document everything: what worked, what broke, how the model changed over time. Use that as your playbook template. Your team needs training too. Procurement staff should understand basic AI concepts: supervised vs. unsupervised learning, precision vs. recall, model drift. Don’t wait for a data scientist to explain it. Use Microsoft’s free 4-hour AI procurement primer. It’s enough to get you through vendor demos.
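For procurement staff getting comfortable with precision and recall, a small worked example may help. The sketch below uses a made-up fraud-flagging pilot of 100 claims. The counts and the `precision_recall_f1` helper are hypothetical; the formulas are the standard definitions. It shows why a vendor quoting "94% accuracy" can still fall well short of an F1 floor such as the 0.88 used in the SLA section.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical pilot: 100 claims, 10 of them actually fraudulent (label 1).
# The model flags 8 claims: 6 real frauds and 2 false alarms. It misses 4.
y_true = [1] * 10 + [0] * 90
y_pred = [1] * 6 + [0] * 4 + [1] * 2 + [0] * 88

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
precision, recall, f1 = precision_recall_f1(y_true, y_pred)

print(f"accuracy={accuracy:.2f} precision={precision:.2f} "
      f"recall={recall:.2f} f1={f1:.2f}")
# prints: accuracy=0.94 precision=0.75 recall=0.60 f1=0.67
```

Accuracy looks strong only because most claims are legitimate. Recall shows the model misses 4 of every 10 frauds, which is the number a pilot report should lead with.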

The Future Is Agentic

By 2027, AI procurement won’t be done by humans alone. It’ll be done by agentic systems: AI tools that can evaluate vendors, negotiate terms, and monitor SLAs autonomously, while keeping humans in the loop for strategic decisions. Infosys BPM’s 2026 playbook shows these systems cut evaluation cycles by 32% without sacrificing compliance. But you don’t need to wait. Start today. Inventory your next 10 contracts. Flag which ones could include AI. Talk to legal. Bring in your data team. Build your first checklist. The EU AI Act’s obligations are phasing in, and U.S. federal policy remains in flux after Executive Order 14110, issued in 2023, was rescinded in 2025. Regulation is coming either way, and you’ll be forced to comply. Better to be ready than fined.

Who’s Doing This Right?

Manufacturers and financial services lead adoption, with 52% and 48% of firms using formal playbooks. Why? They’re regulated, data-rich, and under pressure to cut costs. Public sector lags at 18%, but that’s changing fast. The U.S. Department of Defense is now requiring AI procurement playbooks for all new contracts over $500K. GEP, Infosys BPM, and Microsoft are the main playbook providers. GEP leads in enterprise adoption with 28% market share. But you don’t need to buy one. Use Microsoft’s free public playbook (updated Q4 2025) as your starting point. It’s detailed, practical, and already used by 300+ organizations.

What Happens If You Do Nothing?

You’ll pay more. You’ll get locked in. You’ll face audits you can’t pass. And when your AI model starts making biased hiring decisions or misdiagnosing patients, you won’t be able to prove you did due diligence. Gartner predicts organizations without AI procurement frameworks will face 25-35% higher total cost of ownership by 2027. That’s not a risk. That’s a financial liability.

What’s the difference between an AI procurement playbook and a regular vendor contract?

A regular contract focuses on delivery, price, and support. An AI procurement playbook adds layers for model behavior, data ownership, bias monitoring, drift detection, and continuous learning. It treats the AI system as a living product, not a one-time purchase.

Can I use a cloud provider’s AI model without a playbook?

You can, but you’re taking huge risks. AWS, Azure, and GCP offer pre-built models, but their terms often claim ownership of any fine-tuned versions. Without a playbook, you can’t negotiate better terms. Use their templates as a base, then add your own IP and SLA clauses.

How do I know if a vendor is lying about their model’s fairness?

Ask for their bias test reports, not just claims. Demand to see the test datasets and metrics like disparate impact ratio, equal opportunity difference, and demographic parity. If they refuse, or if the data is incomplete, walk away. Real vendors have third-party audits.
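If a vendor does hand over test data, the three metrics named above are simple enough to recompute yourself. The following is a minimal sketch using their common textbook definitions, with binary labels and predictions and two groups. The `fairness_report` function, the group names, and the toy data are hypothetical, and a vendor's audit may define the metrics slightly differently, so ask which definitions they used.

```python
def selection_rate(preds):
    """Share of cases that received the favorable prediction (1)."""
    return sum(preds) / len(preds)


def true_positive_rate(labels, preds):
    """Share of truly positive cases that were predicted positive."""
    hits = [p for y, p in zip(labels, preds) if y == 1]
    return sum(hits) / len(hits)


def fairness_report(labels, preds, groups, protected, reference):
    """Compare a protected group against a reference group."""
    def subset(group):
        idx = [i for i, g in enumerate(groups) if g == group]
        return [labels[i] for i in idx], [preds[i] for i in idx]

    y_p, p_p = subset(protected)
    y_r, p_r = subset(reference)
    return {
        # Ratio of selection rates; 0.8 is the contract floor cited earlier.
        "disparate_impact_ratio": selection_rate(p_p) / selection_rate(p_r),
        # Difference in selection rates; 0 means parity.
        "demographic_parity_diff": selection_rate(p_p) - selection_rate(p_r),
        # Difference in true positive rates; 0 means equal opportunity.
        "equal_opportunity_diff": (true_positive_rate(y_p, p_p)
                                   - true_positive_rate(y_r, p_r)),
    }


# Toy data: 10 applicants per group, 5 qualified (label 1) in each.
labels = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0] * 2
preds = ([1, 1, 1, 1, 0, 1, 1, 0, 0, 0]     # group "A": 6 selected
         + [1, 1, 1, 0, 0, 0, 0, 0, 0, 0])  # group "B": 3 selected
groups = ["A"] * 10 + ["B"] * 10

report = fairness_report(labels, preds, groups, protected="B", reference="A")
for metric, value in report.items():
    print(metric, round(value, 2))
```

On this toy data the disparate impact ratio is 0.5, well under a 0.8 floor, even though both groups contain the same number of qualified applicants.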

Do I need a lawyer to draft an AI procurement contract?

Yes, but not just any lawyer. You need someone trained in AI IP law. Most general counsel don’t understand model weights, fine-tuning, or derivative works. Use the playbook as a checklist, then hire an AI-specialized attorney to review. The cost is less than one lawsuit.

What’s the fastest way to start implementing an AI procurement playbook?

Start with your next cloud contract renewal. Add three clauses: 1) Ownership of fine-tuned models belongs to you, 2) Vendor must provide monthly drift and bias reports, 3) You can export model weights in open format at termination. That’s your playbook in three lines.